[1] WANG Wenbo, ZHANG Zhifei, WANG Ruizhi, et al. Retrieval-augmented generation based on cluster reorganization and pre-parsing[J]. CAAI Transactions on Intelligent Systems, 2026, 21(1): 236-244. [doi:10.11992/tis.202506029]

Retrieval-augmented generation based on cluster reorganization and pre-parsing

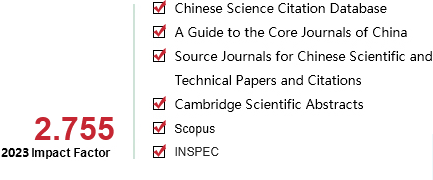

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 21

Issue: 2026, No. 1

Pages: 236-244

Column: Wu Wenjun Artificial Intelligence Science and Technology Award Forum

Publication date: 2026-01-05

- Title:

- Retrieval-augmented generation based on cluster reorganization and pre-parsing

- Keywords:

- deep learning; natural language processing; large language models; vector retrieval; question answering; retrieval-augmented generation; clustering algorithms; prompt engineering

- CLC:

- TP311.1

- DOI:

- 10.11992/tis.202506029

- Abstract:

- Retrieval-augmented generation (RAG) has garnered considerable attention for its ability to provide external knowledge to large language models (LLMs). However, existing RAG methods often struggle to capture both local detailed knowledge and non-contiguous multi-hop knowledge within the original text at the same time. To address this issue, this study proposes a novel RAG method based on cluster reorganization and pre-parsing. In the indexing stage, clustering algorithms are used to group discontinuous but relevant knowledge into new chunks, enhancing the retrieval of multi-hop information. Furthermore, prompt engineering is applied to pre-parse these chunks, dividing them into finer-grained sub-units to improve recall during retrieval. In the retrieval stage, all retrieved chunks are restored to their original context blocks and, together with the query, are fed into the LLM to generate the final answer. Ablation and comparative experiments conducted on the QuALITY dataset demonstrate the effectiveness of the proposed method, which achieves the best performance on the public leaderboard. The findings of this study provide valuable insights for improving indexing and retrieval technologies in RAG.
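The three stages described in the abstract (cluster reorganization, pre-parsing into sub-units, and restoring retrieved sub-units to their context blocks) can be illustrated with a minimal Python sketch. This is not the authors' implementation: the bag-of-words `embed` function stands in for a real embedding model, the greedy threshold clustering in `cluster_reorganize` stands in for whatever clustering algorithm the paper uses, and the sentence splitter in `pre_parse` stands in for the LLM prompt that performs pre-parsing. All function names, the `threshold` and `top_k` parameters, and the sample chunks are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words vector (assumption: stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def cluster_reorganize(chunks, threshold=0.3):
    """Greedy clustering (assumption): each chunk joins its most similar cluster
    if the similarity reaches the threshold, otherwise it starts a new cluster.
    Each cluster is then merged into one reorganized context block."""
    clusters = []  # each cluster is a list of chunk indices
    for i, chunk in enumerate(chunks):
        vec = embed(chunk)
        best, best_sim = None, threshold
        for members in clusters:
            sim = max(cosine(vec, embed(chunks[j])) for j in members)
            if sim >= best_sim:
                best, best_sim = members, sim
        if best is None:
            clusters.append([i])
        else:
            best.append(i)
    return [" ".join(chunks[j] for j in members) for members in clusters]


def pre_parse(block):
    """Split a block into sentence-level sub-units.
    Assumption: placeholder for prompt-based pre-parsing by an LLM."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", block) if s.strip()]


def build_index(chunks, threshold=0.3):
    """Index every sub-unit together with the id of the block it came from."""
    blocks = cluster_reorganize(chunks, threshold)
    index = [(embed(unit), block_id)
             for block_id, block in enumerate(blocks)
             for unit in pre_parse(block)]
    return blocks, index


def retrieve(query, blocks, index, top_k=2):
    """Rank sub-units against the query, then restore each hit to its full block."""
    q = embed(query)
    ranked = sorted(index, key=lambda entry: cosine(q, entry[0]), reverse=True)[:top_k]
    seen, context = set(), []
    for _, block_id in ranked:
        if block_id not in seen:  # avoid returning the same block twice
            seen.add(block_id)
            context.append(blocks[block_id])
    return context


# Hypothetical example: chunks 0 and 2 are related but not adjacent in the source.
chunks = [
    "Alice founded the robotics lab in 2010.",
    "Spring weather was mild across the valley.",
    "The robotics lab founded by Alice later moved to Berlin.",
]
blocks, index = build_index(chunks)
context = retrieve("Where did the lab Alice founded move?", blocks, index, top_k=1)
print(context)
```

In this toy run, the two non-adjacent chunks about the lab are merged into one block, so a single sub-unit hit returns both facts as context for the LLM. Matching on fine-grained sub-units while returning the whole block is the design idea the abstract attributes to the retrieval stage.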



Last Update:

2026-01-05