[1] LU Jun, ZOU Kangcheng, LI Yang. Feature flow-based point cloud object detection method[J]. CAAI Transactions on Intelligent Systems, 2026, 21(1): 146-155. [doi:10.11992/tis.202503005]

Feature flow-based point cloud object detection method

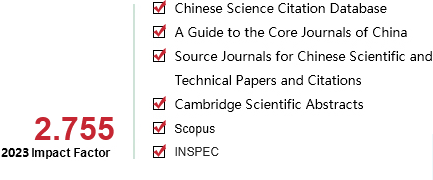

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 21

Issue: 2026, No. 1

Pages: 146-155

Column: Academic Papers: Intelligent Systems

Publication date: 2026-01-05

- Title:

- Feature flow-based point cloud object detection method

- Keywords:

- lidar point cloud; object detection; feature flow; feature alignment; temporal feature fusion; deformable attention mechanism; bird’s-eye view; multi-frame point cloud fusion

- CLC:

- TP391

- DOI:

- 10.11992/tis.202503005

- Abstract:

- To address the loss of scene information and the missed detections caused by point cloud sparsity in existing lidar-based 3D object detection methods, this paper proposes a single-stage 3D object detection algorithm based on feature flow, which improves detection performance through multi-frame spatio-temporal feature fusion and a dynamic alignment mechanism. First, a multi-frame fusion framework driven by a gated network is constructed; a deformable attention mechanism works together with the spatio-temporal feature extraction module to dynamically align cross-frame features and suppress false detections caused by fusing unaligned features. Second, a deformable attention mechanism guided by spatio-temporal features is designed, which predicts feature offsets and weights from object motion information to improve feature matching accuracy on sparse point clouds. Finally, a hierarchical feature flow extraction module is designed, which combines multi-scale feature extraction with a progressive fusion strategy to strengthen scene representation. Experiments show that the proposed algorithm achieves 63.73% mAP on the nuScenes validation set, 4.51% higher than the voxel-based baseline, and that detection accuracy for small objects such as motorcycles and bicycles improves by more than 14%. Ablation experiments show that the multi-frame complementary mechanism raises the recall of long-range objects (>50 m) by 16.2% and reduces the missed-detection rate in occluded scenes by 11.8%. This study provides an effective solution for 3D detection on sparse point clouds in autonomous driving.
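
The gated multi-frame fusion mentioned in the abstract can be illustrated with a minimal sketch. The abstract does not specify the form of the gate, so the per-channel sigmoid gate, the function name `gated_fuse`, and the weight parameters below are all assumptions for illustration, not the authors' implementation. The previous-frame features are assumed to be already aligned to the current frame.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function mapping any real value into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def gated_fuse(current, aligned_prev, w_cur, w_prev, bias):
    """Fuse two per-cell feature vectors channel by channel.

    Hypothetical gate (an assumption, not taken from the paper):
        gate  = sigmoid(w_cur * current + w_prev * aligned_prev + bias)
        fused = gate * current + (1 - gate) * aligned_prev
    """
    fused = []
    for c, p, wc, wp, b in zip(current, aligned_prev, w_cur, w_prev, bias):
        gate = sigmoid(wc * c + wp * p + b)
        # gate near 1 keeps the current frame, gate near 0 keeps the previous one
        fused.append(gate * c + (1.0 - gate) * p)
    return fused


# With zero weights and bias the gate is 0.5, so the result is a plain average.
averaged = gated_fuse([1.0, 3.0], [3.0, 1.0], [0.0, 0.0], [0.0, 0.0], [0.0, 0.0])

# A large positive bias drives the gate toward 1, so the current frame dominates.
current_only = gated_fuse([1.0, 3.0], [3.0, 1.0], [0.0, 0.0], [0.0, 0.0], [50.0, 50.0])
```

In a trained network the gate parameters would be learned, letting the model lean on earlier frames where the current sweep is sparse and on the current frame where earlier features are unreliable.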


Last Update:

2026-01-05