[1] LIU Wanjun, ZHAO Siqi, QU Haicheng, et al. Combining foreground feature reinforcement and region mask self-attention for fine-grained image classification[J]. CAAI Transactions on Intelligent Systems, 2022, 17(6): 1134-1144. [doi:10.11992/tis.202109029]


Combining foreground feature reinforcement and region mask self-attention for fine-grained image classification

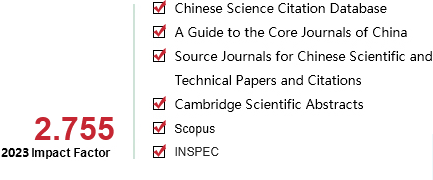

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 17

Issue: 6 (2022)

Pages: 1134-1144

Column: Academic Papers: Machine Perception and Pattern Recognition

Publication date: 2022-11-05

- Title: Combining foreground feature reinforcement and region mask self-attention for fine-grained image classification

- Keywords: fine-grained image classification; object localization; region-based mask; self-attention; diverse feature; feature reinforcement; residual network; deep learning

- CLC (Chinese Library Classification): TP391.4

- DOI: 10.11992/tis.202109029

- Abstract: Fine-grained image classification is difficult because the subtle features that separate visually similar subordinate classes are hard to extract and are easily disturbed by irrelevant background noise. To address this, this study presents a method that combines foreground feature reinforcement with region mask self-attention. First, ResNet50 extracts global features of the input image. Next, the foreground feature reinforcement network predicts the position coordinates of the foreground object in the input image; it suppresses background interference and enhances the features of the foreground object so that the object is effectively highlighted. Finally, the region mask self-attention network learns, from the feature-enhanced foreground object, rich and diverse fine-grained information that distinguishes it from other subclasses. A multi-branch loss function constrains the network's feature learning throughout the process. Comprehensive experiments show that the proposed approach outperforms other mainstream methods on the CUB-200-2011, Stanford Cars, and FGVC-Aircraft datasets, achieving accuracies of 88.0%, 95.3%, and 93.6%, respectively.
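The abstract does not say exactly how the foreground feature reinforcement module predicts the object's coordinates, or how the branch losses are weighted. As a minimal sketch under stated assumptions, the Python below shows one common, training-free way to turn a convolutional activation map into foreground box coordinates (the mean-threshold plus largest-connected-component rule popularized by SCDA for fine-grained retrieval), together with a multi-branch loss written as a weighted sum of per-branch cross-entropy terms. The function names `foreground_box`, `cross_entropy`, and `multi_branch_loss`, the uniform cell-to-pixel scaling, and the default equal branch weights are hypothetical illustrations, not the authors' implementation. Maps and logits are plain Python lists so the sketch has no dependencies.

```python
from collections import deque
from math import exp, log


def foreground_box(act, img_h, img_w):
    """Hypothetical helper: estimate a foreground box from a 2D activation map.

    act: H x W list of lists, e.g. a channel-summed conv feature map (assumed).
    Returns (top, left, bottom, right) in input-image pixels; bottom/right exclusive.
    """
    h, w = len(act), len(act[0])
    mean = sum(sum(row) for row in act) / (h * w)
    # SCDA-style mask: keep cells whose activation exceeds the map's mean.
    mask = [[v > mean for v in row] for row in act]

    # Keep only the largest 4-connected component, found by breadth-first search.
    seen = [[False] * w for _ in range(h)]
    best = []
    for i in range(h):
        for j in range(w):
            if not mask[i][j] or seen[i][j]:
                continue
            comp, queue = [], deque([(i, j)])
            seen[i][j] = True
            while queue:
                y, x = queue.popleft()
                comp.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(comp) > len(best):
                best = comp

    if not best:  # Constant map: nothing stands out, so fall back to the whole image.
        return (0, 0, img_h, img_w)
    ys = [y for y, _ in best]
    xs = [x for _, x in best]
    # Assumed uniform mapping: each feature cell covers (img_h/h) x (img_w/w) pixels.
    sy, sx = img_h / h, img_w / w
    return (int(min(ys) * sy), int(min(xs) * sx),
            int((max(ys) + 1) * sy), int((max(xs) + 1) * sx))


def cross_entropy(logits, label):
    """Softmax cross-entropy for one sample, using a stable log-sum-exp."""
    m = max(logits)
    lse = m + log(sum(exp(z - m) for z in logits))
    return lse - logits[label]


def multi_branch_loss(branch_logits, label, weights=None):
    """Hypothetical multi-branch loss: weighted sum of each branch's cross-entropy."""
    if weights is None:
        weights = [1.0] * len(branch_logits)
    if len(weights) != len(branch_logits):
        raise ValueError("need one weight per branch")
    return sum(wt * cross_entropy(lg, label) for wt, lg in zip(weights, branch_logits))
```

For example, a 4x4 map whose only strong response is a central 2x2 block gives the box (56, 56, 168, 168) on a 224x224 image. In a real pipeline one might build the map by summing the channels of ResNet50's last-stage features and use the box to crop and rescale the input before the foreground branch; the abstract does not state whether the paper does this.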

