[1] LI Xiuquan. Main features and development trend in current artificial intelligence technology innovation[J]. CAAI Transactions on Intelligent Systems, 2020, 15(2): 409-412. [doi:10.11992/tis.202001030]

Main features and development trend in current artificial intelligence technology innovation

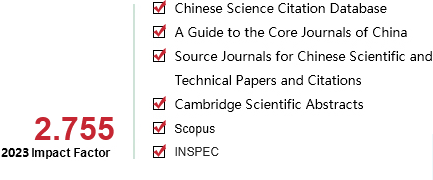

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 15

Issue: 2020, No. 2

Pages: 409-412

Column: Insights and Collisions (洞见与碰撞)

Publication date: 2020-03-05

- Title: Main features and development trend in current artificial intelligence technology innovation

- Keywords: artificial intelligence; technical form; innovation feature; development trend; software-hardware collaboration; technology fusion; lightweight model

- CLC: TP18

- DOI: 10.11992/tis.202001030

- Abstract: In recent years, global artificial intelligence (AI) development has entered a new period of activity, with rapid iteration of new theories, models, and algorithms. This study analyzes the main features of current AI technology innovation from three perspectives: model algorithms, software and hardware implementations, and intelligent system forms. It summarizes some of the innovation hotspots in domestic and international frontier AI research. Finally, it discusses several possible trends in the future development of AI technology in terms of breakthroughs in basic theory, innovation in underlying computing models, and the evolution of model algorithms.

- References:

[1] KIM B, WATTENBERG M, GILMER J, et al. Interpretability beyond feature attribution: quantitative testing with concept activation vectors (TCAV)[C]//Proceedings of the 35th International Conference on Machine Learning. Stockholm, Sweden, 2018: 2673-2682.

[2] BATTAGLIA P W, HAMRICK J B, BAPST V, et al. Relational inductive biases, deep learning, and graph networks[EB/OL]. https://arxiv.org/abs/1806.0126.

[3] KIM Y. Convolutional neural networks for sentence classification[C]//Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP). Doha, Qatar, 2014: 1746-1751.

[4] PETERS M E, NEUMANN M, IYYER M, et al. Deep contextualized word representations[C]//Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics (NAACL). New Orleans, LA, USA, 2018.

[5] DEVLIN J, CHANG Mingwei, LEE K, et al. BERT: Pre-training of deep bidirectional transformers for language understanding[C]//Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics (NAACL). Minneapolis, MN, USA, 2019.

[6] KRIZHEVSKY A, SUTSKEVER I, HINTON G. ImageNet classification with deep convolutional neural networks[C]//Proceedings of the 26th Annual Conference on Neural Information Processing Systems (NIPS). Lake Tahoe, USA, 2012: 1097-1105.

[7] HE Kaiming, ZHANG Xiangyu, REN Shaoqing, et al. Deep residual learning for image recognition[EB/OL]. https://arxiv.org/abs/1512.03385.

[8] STRUBELL E, GANESH A, MCCALLUM A. Energy and policy considerations for deep learning in NLP[C]//Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics. Florence, Italy, 2019.

[9] ABADI M, AGARWAL A, BARHAM P, et al. TensorFlow: Large-scale machine learning on heterogeneous distributed systems[EB/OL]. https://arxiv.org/abs/1603.04467v1.

[10] PASZKE A, GROSS S, MASSA F, et al. PyTorch: An imperative style, high-performance deep learning library[EB/OL]. https://arxiv.org/abs/1912.01703?context=cs.LG.

[11] JOUPPI N P, YOUNG C, PATIL N, et al. In-datacenter performance analysis of a tensor processing unit[C]//Proceedings of the 44th Annual International Symposium on Computer Architecture (ISCA). Toronto, Canada, 2017: 1-12.

[12] NVIDIA. GPU-based deep learning inference: a performance and power analysis[EB/OL]. California: NVIDIA, 2015. (2015-11)[2020-03-25]. https://www.nvidia.com/content/tegra/embedded-systems/pdf/jetson_tx1_whitepaper.pdf.

[13] SILVER D, HUANG A, MADDISON C J, et al. Mastering the game of Go with deep neural networks and tree search[J]. Nature, 2016, 529(7587): 484-489.

[14] ULLMAN S. Using neuroscience to develop artificial intelligence[J]. Science, 2019, 363(6428): 692-693.

[15] ROY K, JAISWAL A, PANDA P. Towards spike-based machine intelligence with neuromorphic computing[J]. Nature, 2019, 575(7784): 607-617.

[16] PEI Jing, DENG Lei, SONG Sen, et al. Towards artificial general intelligence with hybrid Tianjic chip architecture[J]. Nature, 2019, 572(7767): 106-111.

[17] YING Mingsheng. Quantum computation, quantum theory and AI[J]. Artificial Intelligence, 2010, 174(2): 162-176.
