[1] XU Peng, XIE Guangming, WEN Jiayan, et al. Event-triggered reinforcement learning formation control for multi-agent[J]. CAAI Transactions on Intelligent Systems, 2019, 14(1):93-98. [doi:10.11992/tis.201807010]

Event-triggered reinforcement learning formation control for multi-agent

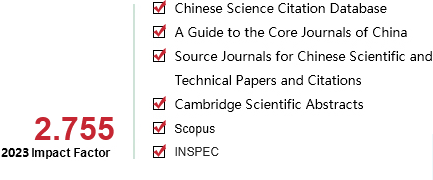

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 14

Issue: No. 1, 2019

Pages: 93-98

Column: Academic Papers - Machine Learning

Publication date: 2019-01-05

- Title:

- Event-triggered reinforcement learning formation control for multi-agent

- Keywords:

- reinforcement learning; multi-agent; event-triggered; formation control; Markov decision processes; swarm intelligence; action-decisions; particle swarm optimization

- CLC:

- TP391.8

- DOI:

- 10.11992/tis.201807010

- Abstract:

- Classical reinforcement learning for multi-agent formation control consumes substantial communication and computing resources. This paper introduces an event-triggered mechanism so that the agents need not make decisions periodically; instead, an agent’s action is updated only when the event-triggered condition is met. The event-triggered condition takes into account both the sum of the total reward and the variance of the current rewards, and a joint optimization strategy is obtained by exchanging information among the agents. Numerical simulation results demonstrate that the proposed multi-agent formation control algorithm effectively reduces the frequency of the agents’ action decisions and the consumption of resources while maintaining system performance.
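The event-triggered idea in the abstract can be illustrated with a minimal Python sketch. It is not the paper's algorithm: the abstract does not give the exact triggering condition, so the rule below (re-decide when the variance of recent rewards exceeds a threshold, or when the total reward has not grown since the last decision) is an assumption, as are the names `EventTriggeredAgent`, `window`, `var_threshold`, and `run`. Learning and information exchange among agents are omitted.

```python
from collections import deque
from statistics import pvariance


class EventTriggeredAgent:
    """Hypothetical agent that re-decides only when a trigger fires."""

    def __init__(self, policy, window=5, var_threshold=0.5):
        self.policy = policy                  # maps a state to an action
        self.rewards = deque(maxlen=window)   # most recent rewards
        self.total_reward = 0.0
        self.last_total = 0.0                 # total reward at the last decision
        self.var_threshold = var_threshold    # assumed tuning parameter
        self.action = None
        self.decisions = 0                    # number of decisions actually made

    def triggered(self):
        # Assumed condition: no action yet, unstable recent rewards,
        # or no gain in total reward since the last decision.
        if self.action is None:
            return True
        unstable = (len(self.rewards) >= 2
                    and pvariance(self.rewards) > self.var_threshold)
        stalled = self.total_reward <= self.last_total
        return unstable or stalled

    def act(self, state):
        # Keep the previous action unless the trigger fires.
        if self.triggered():
            self.action = self.policy(state)
            self.last_total = self.total_reward
            self.decisions += 1
        return self.action

    def observe(self, reward):
        self.rewards.append(reward)
        self.total_reward += reward


def run(agent, rewards):
    """Feed a reward sequence to the agent and return its decision count."""
    for step, reward in enumerate(rewards):
        agent.act(state=step)
        agent.observe(reward)
    return agent.decisions
```

With a steady positive reward, this agent decides once and then reuses its action; with strongly fluctuating rewards the variance term fires and it decides more often. A periodic agent would instead call `policy` at every step.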

