REN Qingji, CAI Zhijie. A method for enhancing Tibetan text data based on adjective knowledge base[J]. CAAI Transactions on Intelligent Systems, 2026, 21(2): 519-528. [doi:10.11992/tis.202503033]


A method for enhancing Tibetan text data based on adjective knowledge base

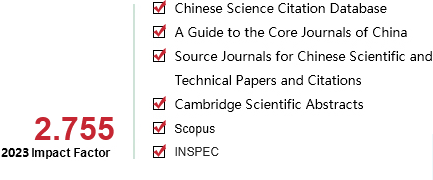

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 21

Issue: 2 (2026)

Pages: 519-528

Column: Academic Papers (Fundamentals of Artificial Intelligence)

Publication date: 2026-03-05

- Title:

- A method for enhancing Tibetan text data based on adjective knowledge base

- Keywords:

- natural language processing; low-resource languages; Tibetan language; adjectives; knowledge base; data augmentation; modified object; sentence structure

- CLC:

- TP391

- DOI:

- 10.11992/tis.202503033

- Abstract:

- In deep-learning-based natural language processing, the quality and scale of a dataset directly affect model performance. Data augmentation is an essential technique in the field and an effective means of expanding and enriching datasets. To address the scarcity of Tibetan data resources, this paper uses real corpora to analyze the semantic and emotional features of Tibetan adjectives and the features of the objects they modify. Tibetan adjectives are divided into five main categories (descriptive properties, states, quantities, sensations, and feelings) comprising forty-six subcategories in total. By extracting the features of Tibetan adjectives and of the objects they modify, we construct a Tibetan adjective knowledge base and a synonym table for modified objects. We then propose a data augmentation method based on this knowledge base: each adjective is replaced with others that match its type and syllable count, and each modified object is replaced with synonyms selected according to its syntactic structure. Experimental results show that the method substantially increases the volume of Tibetan text data, achieving a total growth rate of 990.22% on a sentence set drawn from Tibetan-language textbooks for grades one through six. The augmented data also improve downstream-task performance. With RoBERTa, TiBERT, TBERT, and CINO as the pre-trained models, the correlation coefficient of the SimCSE model increases by 8.78, 3.17, 0.61, and 1.33 percentage points, respectively. In text classification, accuracy, recall, and F1 score improve by 5.97, 9.51, and 9.31 percentage points, respectively.
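The abstract describes the replacement step only in outline. Below is a minimal Python sketch of the idea under stated assumptions, not the authors' implementation: the knowledge-base entries, the `category`/`subcategory` labels, and the helpers `syllable_count`, `adjective_candidates`, and `augment_phrase` are all hypothetical. It counts syllables by splitting on the Tibetan tsheg (་), treats an adjective's "type" as its category plus subcategory, and handles only a simple noun + adjective phrase instead of the syntactic structures the paper uses to choose noun synonyms.

```python
from dataclasses import dataclass
from itertools import product

TSHEG = "\u0f0b"  # Tibetan intersyllabic delimiter (tsheg, "་")


def syllable_count(word: str) -> int:
    """Count Tibetan syllables by splitting on the tsheg and ignoring empty pieces."""
    return len([part for part in word.split(TSHEG) if part])


@dataclass(frozen=True)
class AdjEntry:
    word: str
    category: str     # hypothetical label for one of the five top-level classes
    subcategory: str  # hypothetical label for one of the forty-six subclasses


# Toy knowledge base for illustration only; NOT the paper's actual entries or labels.
ADJ_KB = [
    AdjEntry("དཀར་པོ", "property", "colour"),   # white
    AdjEntry("དམར་པོ", "property", "colour"),   # red
    AdjEntry("སེར་པོ", "property", "colour"),   # yellow
    AdjEntry("ནག་པོ", "property", "colour"),    # black
    AdjEntry("ཆེན་པོ", "property", "size"),     # big
    AdjEntry("ཆུང་ཆུང", "property", "size"),    # small
]

# Hypothetical synonym table for modified objects (nouns).
NOUN_SYNONYMS = {"ཁང་པ": ["ཁྱིམ"]}  # house -> home


def adjective_candidates(adj: str, kb: list[AdjEntry]) -> list[str]:
    """Return other adjectives with the same category, subcategory, and syllable count."""
    entry = next((e for e in kb if e.word == adj), None)
    if entry is None:
        return []  # adjective not in the knowledge base: no replacements
    n = syllable_count(adj)
    return [
        e.word
        for e in kb
        if e.word != adj
        and e.category == entry.category
        and e.subcategory == entry.subcategory
        and syllable_count(e.word) == n
    ]


def augment_phrase(noun: str, adj: str, kb: list[AdjEntry],
                   synonyms: dict[str, list[str]]) -> list[str]:
    """Build new noun + adjective phrases (Tibetan attributive adjectives follow the noun)."""
    nouns = [noun] + synonyms.get(noun, [])
    adjs = [adj] + adjective_candidates(adj, kb)
    return [
        n + TSHEG + a
        for n, a in product(nouns, adjs)
        if (n, a) != (noun, adj)  # skip the original phrase
    ]


variants = augment_phrase("ཁང་པ", "དཀར་པོ", ADJ_KB, NOUN_SYNONYMS)
print(len(variants))  # 7
for v in variants:
    print(v)
```

Here the call yields 7 new phrases: every combination of the two nouns and the four two-syllable colour adjectives, minus the original phrase; the size adjectives are excluded because their subcategory differs. This multiplicative effect suggests how substitution alone can grow a corpus many-fold. Assuming the reported growth rate is defined as (N_aug − N_orig) / N_orig, a rate of 990.22% corresponds to an augmented set about 10.9 times the size of the original.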

