ZENG Biqing, HAN Xuli, WANG Shengyu, et al. Hierarchical double-attention neural networks for sentiment classification[J]. CAAI Transactions on Intelligent Systems, 2020, 15(3): 460-467. DOI: 10.11992/tis.201812017


Hierarchical double-attention neural networks for sentiment classification

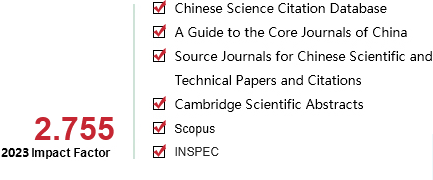

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 15

Issue: 2020, No. 3

Pages: 460-467

Column: Academic Papers: Natural Language Processing and Understanding

Publication date: 2020-05-05

- Keywords: sentiment analysis; attention mechanism; convolutional neural network (CNN); sentiment classification; recurrent neural network (RNN); word vector; deep learning; feature selection

- CLC: TP391

- DOI: 10.11992/tis.201812017

- Abstract: In sentiment classification, feature extraction at the document level is more difficult than at the sentence level because documents are much longer. Most methods therefore apply hierarchical models to document-level sentiment analysis. However, most existing hierarchical methods rely mainly on a recurrent neural network (RNN) combined with an attention mechanism, and the features extracted by a single RNN are not sufficiently clear. To address document-level sentiment classification, we propose a hierarchical double-attention neural network model. First, we improve the convolutional neural network (CNN) by constructing a word-attention CNN, and then extract document features at two levels. At the first level, the word-attention CNN identifies the important words and phrases in each sentence, extracts word-level features, and constructs a feature vector for the sentence. At the second level, an RNN derives the semantics of the document from the sentence vectors, and a global attention mechanism estimates the importance of each sentence, assigns it a corresponding weight, and builds the semantic representation of the whole document. Experimental results on the IMDB, YELP 2013, and YELP 2014 datasets show that our model achieves significant improvements over state-of-the-art methods.


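The two-level pipeline described in the abstract can be illustrated with a minimal, untrained forward pass in plain Python. This is a sketch under stated assumptions, not the authors' implementation, because the abstract does not give the model's equations. The assumptions are: word attention is a learned scoring vector whose softmax weights rescale word embeddings before a ReLU convolution with max pooling; the sentence-level RNN is a plain tanh RNN (the paper may use a gated variant); and global attention is a dot product with a learned context vector. All names (`HierarchicalDoubleAttention`, `u_word`, `u_sent`, `encode_sentence`) are hypothetical.

```python
import math
import random


def softmax(xs):
    # Numerically stable softmax over a list of floats.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def matvec(W, v):
    # W is a list of rows, so this computes W @ v.
    return [dot(row, v) for row in W]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


class HierarchicalDoubleAttention:
    """Hypothetical, untrained toy model; no bias terms, no training loop."""

    def __init__(self, emb_dim, n_filters, window, hidden, n_classes, seed=0):
        rng = random.Random(seed)
        self.window = window
        # Assumed word-attention scorer: one learned vector over embeddings.
        self.u_word = [rng.uniform(-0.1, 0.1) for _ in range(emb_dim)]
        # Each filter spans `window` concatenated word embeddings.
        self.filters = rand_matrix(n_filters, window * emb_dim, rng)
        # Plain tanh RNN over sentence vectors (the paper may use a gated RNN).
        self.W_x = rand_matrix(hidden, n_filters, rng)
        self.W_h = rand_matrix(hidden, hidden, rng)
        # Assumed global-attention context vector over sentence states.
        self.u_sent = [rng.uniform(-0.1, 0.1) for _ in range(hidden)]
        self.W_out = rand_matrix(n_classes, hidden, rng)

    def encode_sentence(self, words):
        # Level 1a: softmax attention over words, then rescale each embedding.
        alphas = softmax([dot(self.u_word, w) for w in words])
        weighted = [[a * x for x in w] for a, w in zip(alphas, words)]
        # Zero-pad sentences shorter than the window so one window always exists.
        seq = weighted + [[0.0] * len(words[0])] * max(0, self.window - len(words))
        windows = [
            [x for w in seq[i:i + self.window] for x in w]
            for i in range(len(seq) - self.window + 1)
        ]
        # Level 1b: ReLU convolution, then max-pool each filter over positions.
        return [max(max(0.0, dot(f, win)) for win in windows) for f in self.filters]

    def forward(self, doc):
        # doc is a list of sentences; each sentence is a list of word vectors.
        sent_vecs = [self.encode_sentence(s) for s in doc]
        # Level 2: RNN over sentence vectors, keeping every hidden state.
        h = [0.0] * len(self.u_sent)
        states = []
        for v in sent_vecs:
            h = [math.tanh(a + b) for a, b in zip(matvec(self.W_x, v), matvec(self.W_h, h))]
            states.append(h)
        # Global attention: weight sentence states and sum into a document vector.
        betas = softmax([dot(self.u_sent, s) for s in states])
        doc_vec = [sum(b * s[j] for b, s in zip(betas, states)) for j in range(len(h))]
        # Class probabilities plus the per-sentence attention weights.
        return softmax(matvec(self.W_out, doc_vec)), betas


# Usage: a random "document" of 3 sentences (5, 2 and 7 words, 8-dim embeddings).
rng = random.Random(1)
doc = [[[rng.gauss(0, 1) for _ in range(8)] for _ in range(n)] for n in (5, 2, 7)]
model = HierarchicalDoubleAttention(emb_dim=8, n_filters=4, window=3, hidden=6, n_classes=2)
probs, sent_weights = model.forward(doc)
print(probs, sent_weights)
```

Because the document vector is a weighted sum of the sentence states, the returned `sent_weights` indicate how much each sentence contributes to the prediction. In this untrained sketch the weights carry no meaning; they would only become informative after training, which is not shown.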