[1] ZHAO Rongfeng, LU Baoli, TANG Xiaojiang, et al. Multi-source hybrid-modality dataset and hierarchical fusion classification method for intelligent cockpits[J]. CAAI Transactions on Intelligent Systems, 2026, 21(1): 83-94. [doi:10.11992/tis.202507024]


Multi-source hybrid-modality dataset and hierarchical fusion classification method for intelligent cockpits

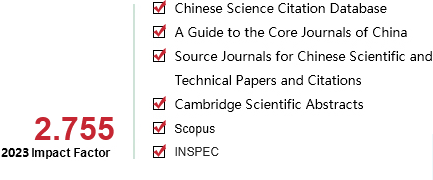

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 21

Issue: 2026, No. 1

Pages: 83-94

Column: Academic Papers: Machine Learning

Publication date: 2026-01-05

- Title: Multi-source hybrid-modality dataset and hierarchical fusion classification method for intelligent cockpits

- Keywords: intelligent cockpit; dataset; multimodal fusion; visual multimodality; behavior classification; dangerous behavior; behavior recognition; multi-source data

- CLC: TP391.4

- DOI: 10.11992/tis.202507024

- Abstract: Open-source data for intelligent cockpits in the driving domain are scarce, and the datasets that exist offer limited modality dimensions, insufficient annotations, and restricted scene diversity. To address these challenges, a multi-source hybrid-modality dataset is constructed. It combines RGB, depth, and infrared visual data with structured textual data describing vehicle information and driving scenarios. A dual-layer annotation scheme is applied to capture ten behavior categories. Building on this dataset, a hierarchical multimodal fusion framework is proposed that enhances feature extraction through cross-modal information exchange and semantically guided fusion mechanisms. Experiments on video classification tasks show that combining RGB data with additional modalities significantly improves environmental understanding. Using the full range of modalities yields a 15.75% increase in accuracy compared with using RGB data alone. These results validate the effectiveness of the multi-source hybrid-modality dataset in advancing intelligent cockpit systems.
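The abstract names two mechanisms, cross-modal information exchange and semantically guided fusion, without giving their architecture. The block below is only an illustrative sketch of how such a two-stage hierarchy could be arranged, not the authors' method. It assumes each modality has already been encoded into a fixed-length feature vector; the function names (`fuse_visual`, `guide_with_text`, `classify`), the scaled dot-product attention in stage 1, and the sigmoid gate in stage 2 are all hypothetical choices made for illustration.

```python
import math
from typing import Dict, List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def fuse_visual(features: Dict[str, Vector]) -> Vector:
    """Stage 1 (assumed): each visual modality attends to all visual
    modalities with scaled dot-product weights; the attended vectors
    are then averaged into one visual representation."""
    names = sorted(features)
    dim = len(features[names[0]])
    scale = math.sqrt(dim)
    attended = []
    for query in names:
        weights = softmax(
            [dot(features[query], features[key]) / scale for key in names]
        )
        attended.append(
            [sum(w * features[k][i] for w, k in zip(weights, names))
             for i in range(dim)]
        )
    return [sum(vec[i] for vec in attended) / len(attended) for i in range(dim)]


def guide_with_text(visual: Vector, text: Vector) -> Vector:
    """Stage 2 (assumed): a sigmoid gate computed from the text embedding
    scales each channel of the fused visual vector."""
    gate = [1.0 / (1.0 + math.exp(-t)) for t in text]
    return [g * v for g, v in zip(gate, visual)]


def classify(fused: Vector, class_weights: List[Vector]) -> int:
    """Linear classifier head: returns the index of the largest logit."""
    logits = [dot(fused, w) for w in class_weights]
    return max(range(len(logits)), key=logits.__getitem__)


# Toy 2-dimensional features; a real encoder would produce far larger vectors.
visual_features = {
    "rgb": [1.0, 0.0],
    "depth": [1.0, 0.0],
    "infrared": [1.0, 0.0],
}
text_embedding = [0.0, 0.0]              # hypothetical vehicle/scenario text embedding
toy_classes = [[0.0, 1.0], [1.0, 0.0]]   # two toy classes; the dataset defines ten

visual = fuse_visual(visual_features)
fused = guide_with_text(visual, text_embedding)
predicted = classify(fused, toy_classes)
```

In this arrangement the visual modalities are merged first and the structured text acts only as a modulating signal, which is one plausible reading of "hierarchical"; other orderings or learned fusion layers are equally compatible with the abstract.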



Last Update: 2026-01-05