GONG Yan, WANG Naibang, ZHANG Xinyu, et al. BEV perception technologies and development trends for intelligent connected vehicles[J]. CAAI Transactions on Intelligent Systems, 2026, 21(1): 41-59. DOI: 10.11992/tis.202505027.


BEV perception technologies and development trends for intelligent connected vehicles

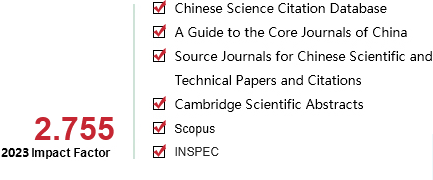

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 21

Issue: 1 (2026)

Pages: 41-59

Column: Review

Published: 2026-01-05

- Title: BEV perception technologies and development trends for intelligent connected vehicles

- Keywords: intelligent connected vehicles; vehicle-infrastructure cooperation; cooperative perception; BEV; autonomous driving; dataset; vehicle-to-everything (V2X); multimodal fusion

- CLC (Chinese Library Classification): TP391.41; U463.6; U495

- DOI: 10.11992/tis.202505027

- Abstract:

- Bird’s eye view (BEV) perception has become a fundamental technique for environmental understanding in autonomous driving because it provides a unified and interpretable spatial representation. This survey provides a comprehensive review of BEV perception technologies tailored for intelligent connected vehicles. It systematically categorizes existing approaches by sensor modality and deployment configuration, covering vehicle-side, infrastructure-side, and vehicle-infrastructure cooperative scenarios. The review introduces a multi-dimensional framework encompassing vision-only, LiDAR-only, and multimodal fusion methods, and analyzes representative techniques in terms of their design principles and implementation strategies. In addition, this work presents the first consolidated comparison of BEV-related datasets, detailing their sensor setups, task types, and annotation schemes to support standardized benchmarking. Finally, the survey outlines key challenges (open-category recognition, unsupervised learning from large-scale data, and robustness under sensor uncertainty) and explores future directions involving end-to-end autonomous driving, embodied intelligence, and cooperative BEV perception systems built on large models.
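To make the idea of a shared BEV representation across vehicle-side and infrastructure-side sensors concrete, here is a minimal sketch. It is not the method of this survey or of any cited work. It assumes perfectly synchronized and calibrated LiDAR point clouds, a planar rigid pose (yaw plus x/y translation) from the roadside frame to the ego frame, and a hand-crafted max-height cell encoding. The function names `transform_to_ego`, `rasterize_bev`, and `fuse_max` are hypothetical. Roadside points are mapped into the ego frame, each point set is scattered onto a BEV grid, and the two grids are fused cell by cell.

```python
import math
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres
Grid = List[List[Optional[float]]]  # grid[row][col]; None marks an empty cell


def transform_to_ego(points: Sequence[Point], yaw: float, tx: float, ty: float) -> List[Point]:
    """Map points from a roadside sensor frame into the ego-vehicle frame.

    Assumes a planar rigid transform: rotation by `yaw` (radians) about the
    z-axis followed by a translation (tx, ty); heights are left unchanged.
    """
    c, s = math.cos(yaw), math.sin(yaw)
    return [(c * x - s * y + tx, s * x + c * y + ty, z) for x, y, z in points]


def rasterize_bev(points: Sequence[Point], x_range: Tuple[float, float],
                  y_range: Tuple[float, float], cell: float) -> Grid:
    """Scatter points onto a BEV grid, keeping the maximum height per cell."""
    (x_min, x_max), (y_min, y_max) = x_range, y_range
    nx = int(round((x_max - x_min) / cell))
    ny = int(round((y_max - y_min) / cell))
    grid: Grid = [[None] * nx for _ in range(ny)]
    for x, y, z in points:
        if not (x_min <= x < x_max and y_min <= y < y_max):
            continue  # ignore points outside the region of interest
        # Columns index x and rows index y; the clamp guards against float round-off.
        col = min(int((x - x_min) // cell), nx - 1)
        row = min(int((y - y_min) // cell), ny - 1)
        current = grid[row][col]
        if current is None or z > current:
            grid[row][col] = z
    return grid


def fuse_max(a: Grid, b: Grid) -> Grid:
    """Cell-wise fusion of two same-shaped BEV grids: keep the larger height."""
    fused: Grid = []
    for row_a, row_b in zip(a, b):
        fused.append([
            vb if va is None else (va if vb is None else max(va, vb))
            for va, vb in zip(row_a, row_b)
        ])
    return fused


if __name__ == "__main__":
    roi_x, roi_y, cell = (0.0, 20.0), (-10.0, 10.0), 1.0
    # Hypothetical ego-vehicle LiDAR returns; the last one lies outside the ROI.
    vehicle_pts = [(5.2, -3.7, 0.8), (5.9, -3.1, 1.2), (25.0, 0.0, 1.0)]
    # Hypothetical roadside return; the roadside frame is rotated 90 degrees
    # relative to the ego frame and offset 10 m along the ego x-axis.
    roadside_pts = transform_to_ego([(2.0, 0.0, 1.5)], math.pi / 2, 10.0, 0.0)
    fused = fuse_max(rasterize_bev(vehicle_pts, roi_x, roi_y, cell),
                     rasterize_bev(roadside_pts, roi_x, roi_y, cell))
    occupied = [(r, c, h) for r, row in enumerate(fused)
                for c, h in enumerate(row) if h is not None]
    print(occupied)  # [(6, 5, 1.2), (12, 10, 1.5)]
```

A max-height grid is only one simple encoding chosen for readability. Learned BEV methods such as those in the reference list (e.g., camera view transformers like LSS [3] and BEVFormer [10, 22]) produce multi-channel feature maps instead, and cooperative systems often exchange such intermediate features rather than raw points to reduce communication cost.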

- References:


[1] GONG Yan, ZHANG Xinyu, LU Jianli, et al. Steering angle-guided multimodal fusion lane detection for autonomous driving[J]. IEEE transactions on intelligent transportation systems, 2025, 26(2): 1470-1481.

[2] BERTOZZI M, BROGGI A, FASCIOLI A. Stereo inverse perspective mapping: theory and applications[J]. Image and vision computing, 1998, 16(8): 585-590.

[3] PHILION J, FIDLER S. Lift, splat, shoot: encoding images from arbitrary camera rigs by implicitly unprojecting to 3D[C]//Computer Vision – ECCV 2020. Cham: Springer, 2020: 194-210.

[4] MA Qihang, TAN Xin, QU Yanyun, et al. COTR: compact occupancy Transformer for vision-based 3D occupancy prediction[C]//2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Seattle: IEEE, 2024: 19936-19945.

[5] LI Yinhao, GE Zheng, YU Guanyi, et al. BEVDepth: acquisition of reliable depth for multi-view 3D object detection[J]. Proceedings of the AAAI conference on artificial intelligence, 2023, 37(2): 1477-1485.

[6] HUANG Junjie, HUANG Guan, ZHU Zheng, et al. BEVDet: high-performance multi-camera 3D object detection in bird-eye-view[EB/OL]. (2021-12-22)[2025-05-27]. https://arxiv.org/abs/2112.11790.

[7] ZHANG Yunpeng, ZHU Zheng, ZHENG Wenzhao, et al. BEVerse: unified perception and prediction in birds-eye-view for vision-centric autonomous driving[EB/OL]. (2022-05-19)[2025-05-27]. https://arxiv.org/abs/2205.09743.

[8] WANG Yan, CHAO Weilun, GARG D, et al. Pseudo-LiDAR from visual depth estimation: bridging the gap in 3D object detection for autonomous driving[C]//2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Long Beach: IEEE, 2020: 8437-8445.

[9] GUPTA P, RENGARAJAN R, BANKAPUR V, et al. CVCP-fusion: on implicit depth estimation for 3D bounding box prediction[EB/OL]. (2024-10-16)[2025-05-27]. https://arxiv.org/abs/2410.11211.

[10] LI Zhiqi, WANG Wenhai, LI Hongyang, et al. BEVFormer: learning bird’s-eye-view representation from LiDAR-camera via spatiotemporal transformers[J]. IEEE transactions on pattern analysis and machine intelligence, 2025, 47(3): 2020-2036.

[11] LIU Wenxi, LI Qi, YANG Weixiang, et al. Monocular BEV perception of road scenes via front-to-top view projection[J]. IEEE transactions on pattern analysis and machine intelligence, 2024, 46(9): 6109-6125.

[12] LU Siyi, HE Lei, LI S E, et al. Hierarchical end-to-end autonomous driving: integrating BEV perception with deep reinforcement learning[C]//2025 IEEE International Conference on Robotics and Automation. Atlanta: IEEE, 2025: 8856-8863.

[13] ZHANG Zhihuang, XU Meng, ZHOU Wenqiang, et al. BEV-Locator: an end-to-end visual semantic localization network using multi-view images[J]. Science China information sciences, 2025, 68(2): 122106.

[14] JUN W, LEE S, JUN W, et al. A comparative study and optimization of camera-based BEV segmentation for real-time autonomous driving[J]. Sensors, 2025, 25(7): 2300.

[15] LUO Zhipeng, ZHOU Changqing, PAN Liang, et al. Exploring point-BEV fusion for 3D point cloud object tracking with transformer[J]. IEEE transactions on pattern analysis and machine intelligence, 2024, 46(9): 5921-5935.

[16] YANG C, LIN Tianwei, HUANG Lichao, et al. WidthFormer: toward efficient transformer-based BEV view transformation[C]//2024 IEEE/RSJ International Conference on Intelligent Robots and Systems. Abu Dhabi: IEEE, 2024: 8457-8464.

[17] DONG Peiyan, KONG Zhenglun, MENG Xin, et al. HotBEV: hardware-oriented transformer-based multi-view 3D detector for BEV perception[J]. Advances in neural information processing systems, 2023, 36: 2824-2836.

[18] GONG Yan, LU Jianli, LIU Wenzhuo, et al. SIFDriveNet: speed and image fusion for driving behavior classification network[J]. IEEE transactions on computational social systems, 2024, 11(1): 1244-1259.

[19] LI Zhiwei, ZHANG Xinyu, TIAN Chi, et al. TVG-ReID: transformer-based vehicle-graph re-identification[J]. IEEE transactions on intelligent vehicles, 2023, 8(11): 4644-4652.

[20] LI Zhiqi, YU Zhiding, WANG Wenhai, et al. FB-BEV: BEV representation from forward-backward view transformations[C]//2023 IEEE/CVF International Conference on Computer Vision. Paris: IEEE, 2024: 6896-6905.

[21] LANG Bo, LI Xin, CHUAH M C. BEV-TP: end-to-end visual perception and trajectory prediction for autonomous driving[J]. IEEE transactions on intelligent transportation systems, 2024, 25(11): 18537-18546.

[22] LI Zhiqi, WANG Wenhai, LI Hongyang, et al. BEVFormer: learning bird’s-eye-view representation from multi-camera images via spatiotemporal Transformers[EB/OL]. (2022-03-31)[2025-05-27]. https://arxiv.org/abs/2203.17270.

[23] LIU Yingfei, WANG Tiancai, ZHANG Xiangyu, et al. PETR: position embedding transformation for multi-view 3D object detection[C]//Computer Vision–ECCV 2022. Cham: Springer, 2022: 531-548.

[24] LI Yangguang, HUANG Bin, CHEN Zeren, et al. Fast-BEV: a fast and strong bird’s-eye view perception baseline[J]. IEEE transactions on pattern analysis and machine intelligence, 2024, 46(12): 8665-8679.

[25] PENG Lang, CHEN Zhirong, FU Zhangjie, et al. BEVSegFormer: bird’s eye view semantic segmentation from arbitrary camera rigs[C]//2023 IEEE/CVF Winter Conference on Applications of Computer Vision. Waikoloa: IEEE, 2023: 5924-5932.

[26] 黄德启, 黄海峰, 黄德意, 等. BEV感知学习在自动驾驶中的应用综述[J]. 计算机工程与应用, 2025, 61(6): 1-21. HUANG Deqi, HUANG Haifeng, HUANG Deyi, et al. Review of application of BEV perceptual learning in autonomous driving[J]. Computer engineering and applications, 2025, 61(6): 1-21.

[27] 时培成, 董心龙, 杨爱喜, 等. 面向自动驾驶的BEV感知算法研究进展[J]. 华中科技大学学报(自然科学版), 2025, 53(5): 104-127. SHI Peicheng, DONG Xinlong, YANG Aixi, et al. Research progress on BEV perception algorithms for autonomous driving: a review[J]. Journal of Huazhong University of Science and Technology (natural science edition), 2025, 53(5): 104-127.

[28] 肖荣春, 刘元盛, 张军, 等. BEV融合感知算法综述[C]//中国计算机用户协会网络应用分会2023年第二十七届网络新技术与应用年会论文集. 北京: 北京市信息服务工程重点实验室, 2023: 68-72. XIAO Rongchun, LIU Yuansheng, ZHANG Jun, et al. Comprehensive review of bird’s eye view fusion perception algorithms[C]//Proceedings of the 27th Annual Conference on Network New Technologies and Applications of China Computer Users Association Network Application Branch, 2023. Beijing: Beijing Key Laboratory of Information Service Engineering, 2023: 68-72.

[29] 周松燃, 卢烨昊, 励雪巍, 等. 车路两端纯视觉鸟瞰图感知研究综述[J]. 中国图象图形学报, 2024, 29(5): 1169-1187. ZHOU Songran, LU Yehao, LI Xuewei, et al. Pure camera-based bird’s-eye-view perception in vehicle side and infrastructure side: a review[J]. Journal of image and graphics, 2024, 29(5): 1169-1187.

[30] YANG Lei, TANG Tao, LI Jun, et al. BEVHeight++: toward robust visual centric 3D object detection[J]. IEEE transactions on pattern analysis and machine intelligence, 2025, 47(6): 5094-5111.

[31] SHI Haobo, HOU Dezao, LI Xiyao, et al. Center-aware 3D object detection with attention mechanism based on roadside LiDAR[J]. Sustainability, 2023, 15(3): 2628.

[32] LI Xiaohai, ZHANG Jieyao, GU Jiaming, et al. BEVRoad: a cross-modal and temporary-recurrent 3D object detector for infrastructure perception[C]//Neural Information Processing. Singapore: Springer, 2026: 270-284.

[33] YANG Lei, YU Kaicheng, TANG Tao, et al. BEVHeight: a robust framework for vision-based roadside 3D object detection[C]//2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Vancouver: IEEE, 2023: 21611-21620.

[34] SHI Hao, PANG Chengshan, ZHANG Jiaming, et al. CoBEV: elevating roadside 3D object detection with depth and height complementarity[J]. IEEE transactions on image processing, 2024, 33: 5424-5439.

[35] WANG Wenjie, LU Yehao, ZHENG Guangcong, et al. BEVSpread: spread voxel pooling for bird’s-eye-view representation in vision-based roadside 3D object detection[C]//2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Seattle: IEEE, 2024: 14718-14727.

[36] FAN Siqi, WANG Zhe, HUO Xiaoliang, et al. Calibration-free BEV representation for infrastructure perception[C]//2023 IEEE/RSJ International Conference on Intelligent Robots and Systems. Detroit: IEEE, 2023: 9008-9013.

[37] JIA Jinrang, YI Guangqi, SHI Yifeng. RopeBEV: a multi-camera roadside perception network in bird’s-eye-view[EB/OL]. (2024-09-18)[2025-05-27]. https://arxiv.org/abs/2409.11706.

[38] ZHANG Tianya, JIN P J. Roadside LiDAR vehicle detection and tracking using range and intensity background subtraction[J]. Journal of advanced transportation, 2022, 2022: 2771085.

[39] WU Jianqing, XU Hao, ZHENG Jianying. Automatic background filtering and lane identification with roadside LiDAR data[C]//2017 IEEE 20th International Conference on Intelligent Transportation Systems. Yokohama: IEEE, 2018: 1-6.

[40] LIN Ciyun, GUO Yingzhi, LI Wenjun, et al. An automatic lane marking detection method with low-density roadside LiDAR data[J]. IEEE sensors journal, 2021, 21(8): 10029-10038.

[41] ZHAO Junxuan, XU Hao, LIU Hongchao, et al. Detection and tracking of pedestrians and vehicles using roadside LiDAR sensors[J]. Transportation research part C: emerging technologies, 2019, 100: 68-87.

[42] CUI Yuepeng, XU Hao, WU Jianqing, et al. Automatic vehicle tracking with roadside LiDAR data for the connected-vehicles system[J]. IEEE intelligent systems, 2019, 34(3): 44-51.

[43] WU Jianqing, XU Hao, ZHAO Junxuan. Automatic lane identification using the roadside LiDAR sensors[J]. IEEE intelligent transportation systems magazine, 2020, 12(1): 25-34.

[44] XU Shaoqing, LI Fang, HUANG Peixiang, et al. TiGDistill-BEV: multi-view BEV 3D object detection via target inner-geometry learning distillation[J]. IEEE transactions on circuits and systems for video technology, 2026, 36(1): 846-860.

[45] ZHANG Xinyu, GONG Yan, LU Jianli, et al. Multi-modal fusion technology based on vehicle information: a survey[J]. IEEE transactions on intelligent vehicles, 2023, 8(6): 3605-3619.

[46] CHEN Yaqing, WANG Huaming. Accurate and robust roadside 3-D object detection based on height-aware scene reconstruction[J]. IEEE sensors journal, 2024, 24(19): 30643-30653.

[47] WANG Shujian, PI Rendong, LI Jian, et al. Object tracking based on the fusion of roadside LiDAR and camera data[J]. IEEE transactions on instrumentation and measurement, 2022, 71: 7006814.

[48] SONG Ziying, LIU Lin, JIA Feiyang, et al. Robustness-aware 3D object detection in autonomous driving: a review and outlook[J]. IEEE transactions on intelligent transportation systems, 2024, 25(11): 15407-15436.

[49] 周一青, 张浩岳, 齐彦丽, 等. 基于感通算融合和信息年龄优化的车联网多节点协同感知[J]. 通信学报, 2024, 45(3): 1-16. ZHOU Yiqing, ZHANG Haoyue, QI Yanli, et al. AoI-enabled multi-node cooperative sensing based on integration of sensing, communication, and computing in vehicular networks[J]. Journal on communications, 2024, 45(3): 1-16.

[50] 夏春星, 刘建航, 狄永锟, 等. 基于网络孪生的车路协同感知共享方案[J]. 计算机应用研究, 2025, 42(5): 1363-1369. XIA Chunxing, LIU Jianhang, DI Yongkun, et al. Perception sharing scheme of vehicle-road cooperation based on cybertwin[J]. Application research of computers, 2025, 42(5): 1363-1369.

[51] 张新钰, 卢毅果, 高鑫, 等. 面向智能网联汽车的车路协同感知技术及发展趋势[J]. 自动化学报, 2025, 51(2): 233-248. ZHANG Xinyu, LU Yiguo, GAO Xin, et al. Vehicle-road collaborative perception technology and development trend for intelligent connected vehicles[J]. Acta automatica sinica, 2025, 51(2): 233-248.

[52] WANG Zhe, FAN Siqi, HUO Xiaoliang, et al. VIMI: vehicle-infrastructure multi-view intermediate fusion for camera-based 3D object detection[EB/OL]. (2023-03-20)[2025-05-27]. https://arxiv.org/abs/2303.10975.

[53] LI Bin, ZHAO Yanan, TAN Huachun, et al. CoFormerNet: a Transformer-based fusion approach for enhanced vehicle-infrastructure cooperative perception[J]. Sensors, 2024, 24(13): 4101.

[54] ZHOU Linyi, GAN Zhongxue, FAN Jiayuan. CenterCoop: center-based feature aggregation for communication-efficient vehicle-infrastructure cooperative 3D object detection[J]. IEEE robotics and automation letters, 2024, 9(4): 3570-3577.

[55] GONG Yan, ZHANG Xinyu, LIU Hao, et al. SkipcrossNets: adaptive skip-cross fusion for road detection[J]. Automotive innovation, 2025, 8(2): 368-384.

[56] ZHANG Haolin, MEKALA M S, YANG Dongfang, et al. 3D harmonic loss: towards task-consistent and time-friendly 3D object detection on edge for V2X orchestration[J]. IEEE transactions on vehicular technology, 2023, 72(12): 15268-15279.

[57] GONG Yan, WANG Lu, XU Lisheng. A feature aggregation network for multispectral pedestrian detection[J]. Applied intelligence, 2023, 53(19): 22117-22131.

[58] XIANG Chao, XIE Xiaopo, FENG Chen, et al. V2I-BEVF: multi-modal fusion based on BEV representation for vehicle-infrastructure perception[C]//2023 IEEE 26th International Conference on Intelligent Transportation Systems. Bilbao: IEEE, 2024: 5292-5299.

[59] CHEN Runjian, MU Yao, XU Runsen, et al. CO3: cooperative unsupervised 3D representation learning for autonomous driving[EB/OL]. (2022-06-08)[2025-05-27]. https://arxiv.org/abs/2206.04028.

[60] YU Hang, ZHAO Yongsheng, ZOU Ying, et al. Multistage fusion approach of lidar and camera for vehicle-infrastructure cooperative object detection[C]//2022 5th World Conference on Mechanical Engineering and Intelligent Manufacturing. Maanshan: IEEE, 2023: 811-816.

[61] JIAO Yang, JIE Zequn, CHEN Shaoxiang, et al. MSMDFusion: fusing LiDAR and camera at multiple scales with multi-depth seeds for 3D object detection[C]//2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Vancouver: IEEE, 2023: 21643-21652.

[62] YU Haibao, TANG Yingjuan, XIE Enze, et al. Vehicle-infrastructure cooperative 3D object detection via feature flow prediction[EB/OL]. (2023-03-19)[2025-05-27]. https://arxiv.org/abs/2303.10552.

[63] GONG Yan, JIANG Xinmin, WANG Lu, et al. TCLaneNet: task-conditioned lane detection network driven by vibration information[J]. IEEE transactions on intelligent vehicles, 2024, 9(9): 5680-5693.

[64] HU Yue, LU Yifan, XU Runsheng, et al. Collaboration helps camera overtake LiDAR in 3D detection[C]//2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Vancouver: IEEE, 2023: 9243-9252.

[65] XU Runsheng, TU Zhengzhong, XIANG Hao, et al. CoBEVT: cooperative bird’s eye view semantic segmentation with sparse transformers[EB/OL]. (2022-07-05)[2025-05-27]. https://arxiv.org/abs/2207.02202.

[66] RÖSSLE D, GERNER J, BOGENBERGER K, et al. Unlocking past information: temporal embeddings in cooperative bird’s eye view prediction[C]//2024 IEEE Intelligent Vehicles Symposium. Jeju Island: IEEE, 2024: 2220-2225.

[67] WEI Sizhe, WEI Yuxi, HU Yue, et al. Asynchrony-robust collaborative perception via bird’s eye view flow[J]. Advances in neural information processing systems, 2023, 36: 28462-28477.

[68] WANG T H, MANIVASAGAM S, LIANG Ming, et al. V2VNet: vehicle-to-vehicle communication for joint perception and prediction[C]//Computer Vision – ECCV 2020. Cham: Springer International Publishing, 2020: 605-621.

[69] LI Jinlong, XU Runsheng, LIU Xinyu, et al. Learning for vehicle-to-vehicle cooperative perception under lossy communication[J]. IEEE transactions on intelligent vehicles, 2023, 8(4): 2650-2660.

[70] QIAO Donghao, ZULKERNINE F, ANAND A. CoBEVFusion: cooperative perception with LiDAR-camera bird’s eye view fusion[C]//2024 International Conference on Digital Image Computing: Techniques and Applications. Perth: IEEE, 2025: 389-396.

[71] XIANG Hao, XU Runsheng, MA Jiaqi. HM-ViT: hetero-modal vehicle-to-vehicle cooperative perception with vision Transformer[C]//2023 IEEE/CVF International Conference on Computer Vision. Paris: IEEE, 2024: 284-295.

[72] SHI Shanwei, ZHANG Chaokun, LV Aojia, et al. MCoT: multi-modal vehicle-to-vehicle cooperative perception with Transformers[C]//2023 IEEE 29th International Conference on Parallel and Distributed Systems. Ocean Flower Island: IEEE, 2024: 1612-1619.

[73] YIN Hongbo, TIAN Daxin, LIN Chunmian, et al. V2VFormer++: multi-modal vehicle-to-vehicle cooperative perception via global-local transformer[J]. IEEE transactions on intelligent transportation systems, 2024, 25(2): 2153-2166.

[74] LIU Wenzhuo, GONG Yan, ZHANG Guoying, et al. GLMDriveNet: global–local multimodal fusion driving behavior classification network[J]. Engineering applications of artificial intelligence, 2024, 129: 107575.

[75] SONG Ziying, YANG Lei, XU Shaoqing, et al. GraphBEV: towards robust BEV feature alignment for multi-modal 3D object detection[C]//Computer Vision–ECCV 2024. Cham: Springer, 2025: 347-366.

[76] CHANG Cheng, ZHANG Jiawei, ZHANG Kunpeng, et al. BEV-V2X: cooperative birds-eye-view fusion and grid occupancy prediction via V2X-based data sharing[J]. IEEE transactions on intelligent vehicles, 2023, 8(11): 4498-4514.

[77] ZHANG Caiji, TIAN Bin, MENG Shi, et al. V2X-BGN: camera-based V2X-collaborative 3D object detection with BEV global non-maximum suppression[C]//2024 IEEE Intelligent Vehicles Symposium. Jeju Island: IEEE, 2024: 602-607.

[78] LIU Chang, ZHU Mingxu, MA Cong. H-V2X: a large scale highway dataset for BEV perception[C]//Computer Vision – ECCV 2024. Cham: Springer, 2025: 139-157.

[79] ZHANG Xiaofei, LI Yining, WANG Jinping, et al. InScope: a new real-world 3D infrastructure-side collaborative perception dataset for open traffic scenarios[J]. Information fusion, 2025, 128: 103951.

[80] BI Jiangfeng, WEI Haiyue, ZHANG Guoxin, et al. DyFusion: cross-attention 3D object detection with dynamic fusion[J]. IEEE Latin America transactions, 2024, 22(2): 106-112.

[81] WANG Naibang, SHANG Deyong, GONG Yan, et al. Collaborative perception datasets for autonomous driving: a review[EB/OL]. (2025-04-17)[2025-05-27]. https://arxiv.org/abs/2504.12696.

[82] ZHANG Xinyu, LI Zhiwei, GONG Yan, et al. OpenMPD: an open multimodal perception dataset for autonomous driving[J]. IEEE transactions on vehicular technology, 2022, 71(3): 2437-2447.

[83] TANG Zheng, NAPHADE M, LIU Mingyu, et al. CityFlow: a city-scale benchmark for multi-target multi-camera vehicle tracking and re-identification[C]//2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Long Beach: IEEE, 2020: 8789-8798.

[84] ZHAN Wei, SUN Liting, WANG Di, et al. INTERACTION dataset: an international, adversarial and cooperative motion dataset in interactive driving scenarios with semantic maps[EB/OL]. (2019-09-30)[2025-05-27]. https://arxiv.org/abs/1910.03088.

[85] WANG Huanan, ZHANG Xinyu, LI Zhiwei, et al. IPS300+: a challenging multi-modal data sets for intersection perception system[C]//2022 International Conference on Robotics and Automation. Philadelphia: IEEE, 2022: 2539-2545.

[86] ZIMMER W, CRESS C, NGUYEN H T, et al. TUMTraf intersection dataset: all you need for urban 3D camera-LiDAR roadside perception[C]//2023 IEEE 26th International Conference on Intelligent Transportation Systems. Bilbao: IEEE, 2024: 1030-1037.

[87] CRESS C, ZIMMER W, STRAND L, et al. A9-dataset: multi-sensor infrastructure-based dataset for mobility research[C]//2022 IEEE Intelligent Vehicles Symposium. Aachen: IEEE, 2022: 965-970.

[88] ZHU Xiaosu, SHENG Hualian, CAI Sijia, et al. RoScenes: a large-scale multi-view 3D dataset for roadside perception[C]//Computer Vision – ECCV 2024. Cham: Springer, 2025: 331-347.

[89] YE Xiaoqing, SHU Mao, LI Hanyu, et al. Rope3D: the roadside perception dataset for autonomous driving and monocular 3D object detection task[C]//2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition. New Orleans: IEEE, 2022: 21309-21318.

[90] ARNOLD E, DIANATI M, DE TEMPLE R, et al. Cooperative perception for 3D object detection in driving scenarios using infrastructure sensors[J]. IEEE transactions on intelligent transportation systems, 2022, 23(3): 1852-1864.

[91] YU Haibao, LUO Yizhen, SHU Mao, et al. DAIR-V2X: a large-scale dataset for vehicle-infrastructure cooperative 3D object detection[C]//2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition. New Orleans: IEEE, 2022: 21329-21338.

[92] YU Haibao, YANG Wenxian, RUAN Hongzhi, et al. V2X-Seq: a large-scale sequential dataset for vehicle-infrastructure cooperative perception and forecasting[C]//2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Vancouver: IEEE, 2023: 5486-5495.

[93] MA Cong, QIAO Lei, ZHU Chengkai, et al. HoloVic: large-scale dataset and benchmark for multi-sensor holographic intersection and vehicle-infrastructure cooperative[C]//2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Seattle: IEEE, 2024: 22129-22138.

[94] ZHU He, WANG Yunkai, KONG Quyu, et al. OTVIC: a dataset with online transmission for vehicle-to-infrastructure cooperative 3D object detection[C]//2024 IEEE/RSJ International Conference on Intelligent Robots and Systems. Abu Dhabi: IEEE, 2024: 10732-10739.

[95] WANG Hai, NIU Yaqing, CHEN Long, et al. DAIR-V2XReid: a new real-world vehicle-infrastructure cooperative re-ID dataset and cross-shot feature aggregation network perception method[J]. IEEE transactions on intelligent transportation systems, 2024, 25(8): 9058-9068.

[96] ZIMMER W, WARDANA G A, SRITHARAN S, et al. TUMTraf V2X cooperative perception dataset[C]//2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Seattle: IEEE, 2024: 22668-22677.

[97] YANG Lei, ZHANG Xinyu, LI Jun, et al. V2X-Radar: a multi-modal dataset with 4D radar for cooperative perception[EB/OL]. (2024-11-17)[2025-05-27]. https://arxiv.org/abs/2411.10962.

[98] XU Runsheng, XIANG Hao, XIA Xin, et al. OPV2V: an open benchmark dataset and fusion pipeline for perception with vehicle-to-vehicle communication[C]//2022 International Conference on Robotics and Automation. Philadelphia: IEEE, 2022: 2583-2589.

[99] LU Yifan, HU Yue, ZHONG Yiqi, et al. An extensible framework for open heterogeneous collaborative perception[EB/OL]. (2024-01-25)[2025-05-27]. https://arxiv.org/abs/2401.13964.

[100] XU Runsheng, XIA Xin, LI Jinlong, et al. V2V4Real: a real-world large-scale dataset for vehicle-to-vehicle cooperative perception[C]//2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Vancouver: IEEE, 2023: 13712-13722.

[101] CHIU H K, HACHIUMA R, WANG C Y, et al. V2V-LLM: vehicle-to-vehicle cooperative autonomous driving with multi-modal large language models[EB/OL]. (2025-02-14)[2025-05-27]. https://arxiv.org/abs/2502.09980.

[102] LI Yiming, LI Zhiheng, CHEN Nuo, et al. Multiagent multitraversal multimodal self-driving: open MARS dataset[C]//2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Seattle: IEEE, 2024: 22041-22051.

[103] LI Yiming, MA Dekun, AN Ziyan, et al. V2X-Sim: multi-agent collaborative perception dataset and benchmark for autonomous driving[J]. IEEE robotics and automation letters, 2022, 7(4): 10914-10921.

[104] MAO Ruiqing, GUO Jingyu, JIA Yukuan, et al. Dolphins: dataset for collaborative perception enabled harmonious and interconnected self-driving[C]//Computer Vision – ACCV 2022. Cham: Springer, 2023: 495-511.

[105] KARVAT M, GIVIGI S. Adver-City: open-source multi-modal dataset for collaborative perception under adverse weather conditions[EB/OL]. (2024-10-08)[2025-05-27]. https://arxiv.org/abs/2410.06380.

[106] XU Runsheng, XIANG Hao, TU Zhengzhong, et al. V2X-ViT: vehicle-to-everything cooperative perception with vision Transformer[C]//Computer Vision – ECCV 2022. Cham: Springer, 2022: 107-124.

[107] HUANG Xun, WANG Jinlong, XIA Qiming, et al. V2X-R: cooperative LiDAR-4D radar fusion with denoising diffusion for 3D object detection[EB/OL]. (2024-11-13)[2025-05-27]. https://arxiv.org/abs/2411.08402.

[108] WANG Tianqi, KIM S, JI Wenxuan, et al. DeepAccident: a motion and accident prediction benchmark for V2X autonomous driving[J]. Proceedings of the AAAI conference on artificial intelligence, 2024, 38(6): 5599-5606.

[109] LI Rongsong, PEI Xin. Multi-V2X: a large scale multi-modal multi-penetration-rate dataset for cooperative perception[EB/OL]. (2024-09-08)[2025-05-27]. https://arxiv.org/abs/2409.04980.

[110] XIANG Hao, ZHENG Zhaoliang, XIA Xin, et al. V2X-Real: a large-scale dataset for vehicle-to-everything cooperative perception[C]//Computer Vision – ECCV 2024. Cham: Springer, 2025: 455-470.

[111] ZHOU Zewei, XIANG Hao, ZHENG Zhaoliang, et al. V2XPnP: vehicle-to-everything spatio-temporal fusion for multi-agent perception and prediction[EB/OL]. (2024-12-02)[2025-05-27]. https://arxiv.org/abs/2412.01812.

[112] FAN Siqi, NIE Zaiqing, RUAN Hongzhi, et al. Learning cooperative trajectory representations for motion forecasting[J]. Advances in neural information processing systems, 2024, 37: 13430-13457.

[113] LUO K Z, DAO M Q, LIU Zhenzhen, et al. Mixed signals: a diverse point cloud dataset for heterogeneous LiDAR V2X collaboration[EB/OL]. (2025-02-19)[2025-05-27]. https://arxiv.org/abs/2502.14156.

[114] HAO Ruiyang, FAN Siqi, DAI Yingru, et al. RCooper: a real-world large-scale dataset for roadside cooperative perception[C]//2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Seattle: IEEE, 2024: 22347-22357.

[115] 崔明阳, 黄荷叶, 许庆, 等. 智能网联汽车架构、功能与应用关键技术[J]. 清华大学学报(自然科学版), 2022, 62(3): 493-508. CUI Mingyang, HUANG Heye, XU Qing, et al. Survey of intelligent and connected vehicle technologies: architectures, functions and applications[J]. Journal of Tsinghua University (science and technology), 2022, 62(3): 493-508.

[116] 沈甜雨, 陶子锐, 王亚东, 等. 具身智能研究的关键问题: 自主感知、行动与进化[J]. 自动化学报, 2025, 51(1): 43-71. SHEN Tianyu, TAO Zirui, WANG Yadong, et al. Key problems of embodied intelligence research: autonomous perception, action, and evolution[J]. Acta automatica sinica, 2025, 51(1): 43-71.

[117] 王文晟, 谭宁, 黄凯, 等. 基于大模型的具身智能系统综述[J]. 自动化学报, 2025, 51(1): 1-19. WANG Wensheng, TAN Ning, HUANG Kai, et al. Embodied intelligence systems based on large models: a survey[J]. Acta automatica sinica, 2025, 51(1): 1-19.

[118] LIN Lei, FU Jiayi, LIU Pengli, et al. Just ask one more time! self-agreement improves reasoning of language models in (almost) all scenarios[C]//Findings of the Association for Computational Linguistics ACL 2024. Stroudsburg: ACL, 2024: 3829-3852.


Last Update: 2026-01-05