[1] MENG Xiang, WANG Boyue, GAO Yihan, et al. Visual-language key clue discovery-based multimodal fake news detection model[J]. CAAI Transactions on Intelligent Systems, 2026, 21(1): 109-119. [doi:10.11992/tis.202505007]


Visual-language key clue discovery-based multimodal fake news detection model

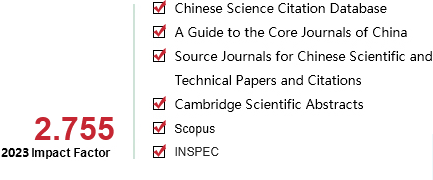

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume:

21

Issue:

2026, No. 1

Pages:

109-119

Column:

Academic papers: Machine perception and pattern recognition

Publication date:

2026-01-05

- Title:

- Visual-language key clue discovery-based multimodal fake news detection model

- Keywords:

- multimodal fake news detection; multi-scale feature interaction; key clue discovery; fine-grained representation; cross-modal attention; global feature alignment; memory-enhanced mechanism; semantic inconsistency detection

- CLC:

- TP391.1

- DOI:

- 10.11992/tis.202505007

- Abstract:

- Multimodal fake news detection aims to enhance the reliability of authenticity assessment by integrating diverse modalities such as text, images, videos, and audio. However, existing models often overlook discriminative local details and struggle to capture the critical inconsistencies between textual and visual content. To address these challenges, this study proposes a novel multimodal fake news detection model, termed the visual-language key clue discovery-based multimodal fake news detection model (VKC-MFND), which is designed to discover key visual-linguistic cues. The model comprises three main components: a multi-scale feature extraction module, a key feature information extraction module, and a multi-scale feature alignment module. Specifically, the multi-scale feature extraction module captures both global features (sentence-level or description-level) and local features (word-level or object box-level) from text and images, thereby enriching the diversity of information representation. The key feature information extraction module utilizes attention-based interactions among fine-grained features to uncover discriminative clues and aligns them with global semantic representations, facilitating the fusion of critical cross-modal information. Meanwhile, the multi-scale feature alignment module optimizes the model using both classification and alignment losses, enhancing semantic consistency in the shared feature space. Extensive experiments conducted on three benchmark multimodal fake news datasets (Weibo, Weibo-19, and Pheme) demonstrate that the proposed model significantly outperforms state-of-the-art approaches. Further ablation studies confirm the effectiveness and necessity of each component in the model.
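
The abstract names two ideas that a small example may make more concrete: attention-based interaction between fine-grained text and image features, and a training objective that adds an alignment term to the classification loss. The following is a minimal sketch under stated assumptions, not the authors' implementation. The function names, the scaled dot-product form of the attention, the cosine-based alignment penalty, and the weight `lam` are all hypothetical choices made for illustration; the abstract does not specify any of them.

```python
import math

def softmax(xs):
    m = max(xs)
    es = [math.exp(x - m) for x in xs]
    s = sum(es)
    return [e / s for e in es]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cosine(a, b):
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)) + 1e-12)

def cross_attend(queries, keys):
    """Each query (e.g. a word vector) attends over keys (e.g. region vectors).

    Assumed form: scaled dot-product attention with keys reused as values.
    """
    d = len(keys[0])
    attended = []
    for q in queries:
        weights = softmax([dot(q, k) / math.sqrt(d) for k in keys])
        attended.append(
            [sum(w * k[j] for w, k in zip(weights, keys)) for j in range(d)]
        )
    return attended

def mean_pool(vectors):
    """Average local vectors into one global vector (a stand-in for a global feature)."""
    n = len(vectors)
    return [sum(v[j] for v in vectors) / n for j in range(len(vectors[0]))]

def total_loss(logits, label, text_global, image_global, lam=0.5):
    """Cross-entropy plus lam * (1 - cosine similarity); lam is a hypothetical weight."""
    classification = -math.log(softmax(logits)[label])
    alignment = 1.0 - cosine(text_global, image_global)
    return classification + lam * alignment

# Toy inputs: two word vectors and three region vectors in a shared 2-d space.
words = [[1.0, 0.0], [0.0, 1.0]]
regions = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]

clues = cross_attend(words, regions)
loss = total_loss([2.0, 0.0], 0, mean_pool(words), mean_pool(regions))
mismatched = total_loss([2.0, 0.0], 0, [1.0, 0.0], [-1.0, 0.0])
```

In this toy setup the pooled text and image vectors point in the same direction, so the alignment term is close to zero and `loss` is essentially the cross-entropy alone, while `mismatched` is larger because the two global vectors point in opposite directions. A real model would learn the features and would likely use a different alignment objective.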



Last Update:

2026-01-05