LIU Shiyi, LIU Jinping, HUANG Lijuan, et al. Infrared and visible image fusion based on multi-scale coordinated convolution and adaptive weighting[J]. CAAI Transactions on Intelligent Systems, 2026, 21(1): 95-108. DOI: 10.11992/tis.202504002.

Infrared and visible image fusion based on multi-scale coordinated convolution and adaptive weighting

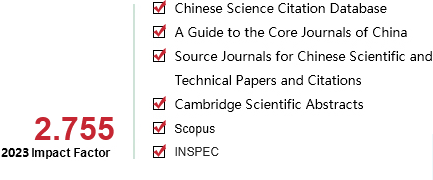

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 21

Issue: 2026, No. 1

Pages: 95-108

Column: Academic Papers · Machine Perception and Pattern Recognition

Publication date: 2026-01-05

- Title: Infrared and visible image fusion based on multi-scale coordinated convolution and adaptive weighting

- Keywords: image fusion; infrared image; visible image; multiscale coordinate convolution; convolutional multilayer perceptron; coordinate attention; adaptive weighting

- CLC: TP391

- DOI: 10.11992/tis.202504002

- Abstract: Convolutional neural network (CNN)-based image fusion models suffer from limited global information perception, insufficient preservation of high-frequency details, and difficulty in configuring loss-function weights. To address these limitations, this article proposes a convolution and multilayer perceptron-integrated multiscale coordinate network (CM-MCNet) for high-quality infrared and visible image fusion. In the encoder of CM-MCNet, a convolutional weighted permute multilayer perceptron module enhances spatial understanding by simulating feature permutation, and it integrates an adaptive feature reweighting mechanism to capture global information effectively. A multiscale coordinate convolution (MsCConv) module is also designed; it leverages central difference convolution to improve the retention and expression of high-frequency details, and its multiscale parallel sub-networks ensure that multi-level features are comprehensively preserved. An embedded coordinate attention mechanism jointly modulates the channel and spatial dimensions, enhancing complementary information while suppressing redundancy. Furthermore, a data-driven adaptive loss weighting strategy dynamically adjusts the contribution of each supervision signal according to image feature statistics. This reduces the complexity of hyperparameter tuning and makes the loss function reflect the characteristics of the source images more accurately. Experiments on the public RoadScene, TNO, and M3FD datasets show that CM-MCNet generates fused images with sharper, better-preserved edges and more natural texture transitions. It also outperforms existing state-of-the-art fusion methods on objective metrics including information entropy, standard deviation, spatial frequency, visual information fidelity, and average gradient. This work offers a new perspective on infrared and visible image fusion and provides a foundation for further advances in the field.
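
The central difference convolution (CDC) that MsCConv builds on can be illustrated in a few lines. This is a minimal single-channel NumPy sketch of the standard CDC formulation, in which a vanilla convolution is reduced by θ times the kernel sum times the centre pixel. Reflect padding, θ = 0.7 as a default, and the function names `conv2d_same` and `central_difference_conv` are illustrative assumptions, not the settings or code of CM-MCNet.

```python
import numpy as np


def conv2d_same(x: np.ndarray, w: np.ndarray) -> np.ndarray:
    """Single-channel 2D cross-correlation; reflect padding keeps the output the size of x."""
    kh, kw = w.shape
    ph, pw = kh // 2, kw // 2
    xp = np.pad(x, ((ph, ph), (pw, pw)), mode="reflect")
    out = np.zeros(x.shape, dtype=float)
    for i in range(kh):
        for j in range(kw):
            # Accumulate w[i, j] times the input shifted by (i - ph, j - pw).
            out += w[i, j] * xp[i:i + x.shape[0], j:j + x.shape[1]]
    return out


def central_difference_conv(x: np.ndarray, w: np.ndarray, theta: float = 0.7) -> np.ndarray:
    """CDC: sum_n w(p_n) x(p0 + p_n) - theta * x(p0) * sum_n w(p_n).

    theta = 0 gives a vanilla convolution; theta = 1 gives a pure
    central-difference (gradient-like) response.
    """
    return conv2d_same(x, w) - theta * w.sum() * x


# Usage: a vertical step edge between columns 2 and 3 (toy data).
img = np.zeros((5, 6))
img[:, 3:] = 1.0
kernel = np.ones((3, 3))
print(central_difference_conv(img, kernel, theta=1.0))
```

With θ = 1 every row of the result is [0, 0, 3, -3, 0, 0]: flat regions map to zero and only the edge responds, which is why the difference term helps retain high-frequency detail, while θ = 0 falls back to ordinary convolution.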
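
The way coordinate attention modulates channel and spatial dimensions jointly can be shown with a forward pass in NumPy. This sketch follows the general coordinate-attention recipe (average pooling along each spatial direction, a shared 1×1 transform, then separate height and width gates), simplified under stated assumptions: batch normalization is omitted, ReLU stands in for the original h-swish activation, and the weights are random placeholders rather than trained CM-MCNet parameters.

```python
import numpy as np


def sigmoid(z: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-z))


def coordinate_attention(x, w_shared, w_h, w_w):
    """Simplified coordinate attention forward pass.

    x:        feature map of shape (C, H, W)
    w_shared: shared 1x1 transform, shape (C_mid, C)
    w_h, w_w: per-direction 1x1 transforms, shape (C, C_mid)
    """
    h = x.shape[1]
    pooled_h = x.mean(axis=2)  # (C, H): average over width, one value per row
    pooled_w = x.mean(axis=1)  # (C, W): average over height, one value per column
    y = np.concatenate([pooled_h, pooled_w], axis=1)  # (C, H + W)
    y = np.maximum(w_shared @ y, 0.0)  # 1x1 conv as a matmul, then ReLU (BN omitted)
    y_h, y_w = y[:, :h], y[:, h:]  # split back into the two directions
    a_h = sigmoid(w_h @ y_h)  # (C, H) gates along the height axis
    a_w = sigmoid(w_w @ y_w)  # (C, W) gates along the width axis
    # Each pixel is scaled by its channel's row gate and column gate.
    return x * a_h[:, :, None] * a_w[:, None, :]


# Usage with random placeholder weights (C = 8, reduction ratio 4).
rng = np.random.default_rng(0)
C, H, W, C_mid = 8, 6, 5, 2
feat = rng.standard_normal((C, H, W))
out = coordinate_attention(
    feat,
    rng.standard_normal((C_mid, C)),
    rng.standard_normal((C, C_mid)),
    rng.standard_normal((C, C_mid)),
)
print(out.shape)
```

Because both gates lie in (0, 1), the module can only re-weight features, emphasizing rows and columns (per channel) that carry complementary information and damping the rest.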
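
The abstract does not give the exact weighting formula, so the following is only a hypothetical illustration of deriving loss weights from image statistics instead of hand-tuning them. Each source image's standard deviation (a contrast proxy) is passed through a temperature-scaled softmax, and the resulting weights mix two L1 intensity terms. The choice of statistic, the softmax, the `temperature` parameter, and the function names are all assumptions.

```python
import numpy as np


def adaptive_weights(ir: np.ndarray, vis: np.ndarray, temperature: float = 1.0) -> np.ndarray:
    """Hypothetical: softmax over per-image standard deviations (contrast proxy)."""
    stats = np.array([ir.std(), vis.std()]) / temperature
    e = np.exp(stats - stats.max())  # subtract the max for numerical stability
    return e / e.sum()  # [w_ir, w_vis], non-negative and summing to 1


def weighted_intensity_loss(fused, ir, vis, temperature=1.0) -> float:
    """Mix two L1 terms with statistics-driven weights instead of fixed constants."""
    w_ir, w_vis = adaptive_weights(ir, vis, temperature)
    return float(w_ir * np.abs(fused - ir).mean() + w_vis * np.abs(fused - vis).mean())


# Usage: a high-contrast "infrared" patch versus a flat "visible" patch (toy data).
ir = (np.indices((4, 4)).sum(axis=0) % 2).astype(float)  # checkerboard, std = 0.5
vis = np.full((4, 4), 0.5)                                # constant, std = 0
print(adaptive_weights(ir, vis))
print(weighted_intensity_loss((ir + vis) / 2, ir, vis))
```

Here the higher-contrast source automatically receives the larger weight; the temperature controls how sharply the weights react to differences in the statistic.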
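
Four of the objective metrics named in the abstract have simple closed forms. The sketch below uses common textbook definitions for 8-bit grayscale images: entropy of the 256-bin histogram (EN), standard deviation (SD), spatial frequency from row and column differences (SF), and average gradient (AG). Normalization conventions differ between papers, so these functions are assumptions and may not match the evaluation code behind the reported results; visual information fidelity is omitted because it requires a multi-scale statistical model.

```python
import numpy as np


def entropy(img: np.ndarray) -> float:
    """EN: Shannon entropy (bits) of the 256-bin grey-level histogram of an 8-bit image."""
    hist = np.bincount(img.astype(np.uint8).ravel(), minlength=256)
    p = hist / hist.sum()
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())


def std_dev(img: np.ndarray) -> float:
    """SD: standard deviation of pixel intensities (global contrast)."""
    return float(img.astype(float).std())


def spatial_frequency(img: np.ndarray) -> float:
    """SF = sqrt(RF^2 + CF^2) from horizontal and vertical first differences."""
    f = img.astype(float)
    rf2 = np.mean(np.diff(f, axis=1) ** 2)  # row frequency squared
    cf2 = np.mean(np.diff(f, axis=0) ** 2)  # column frequency squared
    return float(np.sqrt(rf2 + cf2))


def average_gradient(img: np.ndarray) -> float:
    """AG: mean of sqrt((dx^2 + dy^2) / 2) over the (M-1) x (N-1) grid."""
    f = img.astype(float)
    dx = f[1:, :-1] - f[:-1, :-1]  # difference to the next row
    dy = f[:-1, 1:] - f[:-1, :-1]  # difference to the next column
    return float(np.mean(np.sqrt((dx ** 2 + dy ** 2) / 2)))


# Usage on a toy 4x4 image: left half black, right half white.
toy = np.array([[0, 0, 255, 255]] * 4, dtype=np.uint8)
metrics = [("EN", entropy), ("SD", std_dev), ("SF", spatial_frequency), ("AG", average_gradient)]
for name, fn in metrics:
    print(name, round(fn(toy), 3))
```

Converting to float before differencing avoids unsigned-integer wraparound on `uint8` inputs; higher values of all four metrics indicate more information, contrast, or detail in the fused image.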

Last update: 2026-01-05