[1]LIU Xin,ZENG Kui,CHEN Feng.Transformer action recognition model based on multi-query token selection mechanism[J].CAAI Transactions on Intelligent Systems,2026,21(2):410-422.[doi:10.11992/tis.202503002]


Transformer action recognition model based on multi-query token selection mechanism

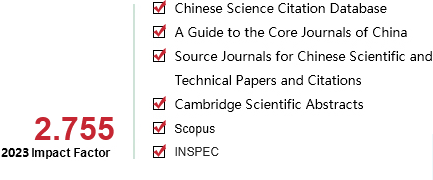

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume:

21

Issue:

2026, No. 2

Pages:

410-422

Column:

Academic Papers: Machine Perception and Pattern Recognition

Publication date:

2026-05-16

- Title:

- Transformer action recognition model based on multi-query token selection mechanism

- Keywords:

- action recognition; attention mechanism; feature fusion; temporal attention; spatial attention; dynamic features; local feature; spatio-temporal feature extraction module

- CLC:

- TP391.41

- DOI:

- 10.11992/tis.202503002

- Abstract:

- To address the limitations of vision Transformers (ViTs) in video action recognition, namely the inability of spatial attention to focus on shallow local features and the inaccuracy of temporal attention in capturing dynamic information, we propose a Transformer-based action recognition model that incorporates a multi-query token selection mechanism. The model introduces a Local Feature Aggregation Module composed of multiple Spatiotemporal Attention Blocks; each block combines 3D convolutions with channel and spatial attention to strengthen the focus on shallow local features. Furthermore, a Global Spatiotemporal Feature Perception Module is constructed from several Spatiotemporal Processing Units. Each unit comprises: (1) a Hybrid Spatial Perception Module, which applies 3D depthwise separable convolutions before the global spatial attention mechanism to strengthen attention to local spatiotemporal neighborhoods; (2) a Temporal Attention Module with Multi-Query Token Selection, which filters the features of each frame to suppress background noise and emphasize human actions; and (3) a Spatiotemporal Feature Fusion Module, which efficiently integrates spatial and temporal features through sequential fusion. Experimental results on different datasets demonstrate that the proposed method outperforms baseline models.
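The abstract does not say how the multi-query token selection is computed, so the following is only a rough sketch of one plausible reading, not the authors' implementation. It assumes that several query vectors (learnable in a real model, random here) score the patch tokens of each frame by scaled dot product, and that the top-k tokens per frame are kept before temporal attention is applied. The names `select_tokens` and `select_video_tokens`, the max-over-queries scoring rule, and the fixed `k` are all hypothetical.

```python
import math
import random


def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def select_tokens(frame_tokens, queries, k):
    """Keep the k tokens of one frame that best match any query.

    Assumption: a token's score is its maximum scaled dot product
    over all query vectors; the paper may score tokens differently.
    """
    scale = math.sqrt(len(queries[0]))
    scores = [max(dot(q, tok) for q in queries) / scale for tok in frame_tokens]
    ranked = sorted(range(len(frame_tokens)), key=lambda i: scores[i], reverse=True)
    kept = sorted(ranked[:k])  # restore the original token order
    return kept, [frame_tokens[i] for i in kept]


def select_video_tokens(video_tokens, queries, k):
    """Apply the per-frame selection to every frame of a clip."""
    return [select_tokens(frame, queries, k) for frame in video_tokens]


# Toy clip: 4 frames, 6 patch tokens per frame, 8-dimensional features.
random.seed(0)
T, N, D, NUM_QUERIES, K = 4, 6, 8, 3, 2
video = [[[random.gauss(0, 1) for _ in range(D)] for _ in range(N)] for _ in range(T)]
# Hypothetical stand-in for query vectors that would be learned in training.
queries = [[random.gauss(0, 1) for _ in range(D)] for _ in range(NUM_QUERIES)]

selected = select_video_tokens(video, queries, K)
for t, (indices, tokens) in enumerate(selected):
    print(f"frame {t}: kept token indices {indices}")
```

Under this reading, the reduced token set of each frame would be passed on to temporal attention, so that low-scoring (presumably background) tokens no longer contribute to the cross-frame computation. Using several queries rather than one lets different queries respond to different parts of the scene, which is one way to interpret the "multi-query" wording.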

