[1] YIN Changsheng, YANG Ruopeng, ZHU Wei, et al. A survey on multi-agent hierarchical reinforcement learning[J]. CAAI Transactions on Intelligent Systems, 2020, 15(4): 646-655. [doi:10.11992/tis.201909027]


A survey on multi-agent hierarchical reinforcement learning

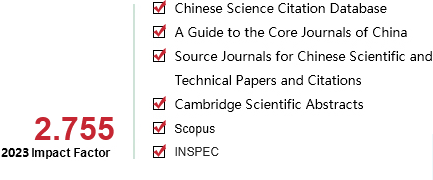

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 15

Issue: 2020, No. 4

Pages: 646-655

Column: Review

Publication date: 2020-07-05

- Title:

- A survey on multi-agent hierarchical reinforcement learning

- Keywords:

- artificial intelligence; machine learning; reinforcement learning; multi-agent; survey; hierarchical reinforcement learning; application status

- CLC:

- TP18

- DOI:

- 10.11992/tis.201909027

- Abstract:

- As an important research area in machine learning and artificial intelligence, multi-agent hierarchical reinforcement learning (MAHRL) combines the collaboration of multi-agent systems (MAS) with the decision making of reinforcement learning (RL) in a general-purpose form. It decomposes an RL problem into sub-problems and solves each of them separately to overcome the so-called curse of dimensionality. MAHRL therefore offers a potential way to solve large-scale, complex decision problems. In this paper, we systematically describe three key technologies of MAHRL: reinforcement learning (RL), the semi-Markov decision process (SMDP), and multi-agent reinforcement learning (MARL). We then describe four main categories of MAHRL methods from the perspective of hierarchical learning: Option, HAM, MAXQ, and End-to-End. Finally, we summarize the application status of MAHRL in robot control, game decision making, and mission planning.



Last Update: 2020-07-25