[1] CHEN Pei, JING Liping. Word representation learning model using matrix factorization to incorporate semantic information[J]. CAAI Transactions on Intelligent Systems, 2017, 12(5):661-667. [doi:10.11992/tis.201706012]


Word representation learning model using matrix factorization to incorporate semantic information

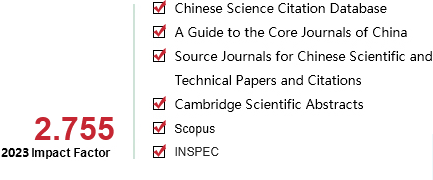

CAAI Transactions on Intelligent Systems [ISSN 1673-4785/CN 23-1538/TP]

Volume: 12

Issue: 2017, No. 5

Pages: 661-667

Column: Academic Papers: Natural Language Processing and Understanding

Publication date: 2017-10-25

- Title: Word representation learning model using matrix factorization to incorporate semantic information

- Keywords: natural language processing; word representation; matrix factorization; semantic information; knowledge base

- CLC: TP391

- DOI: 10.11992/tis.201706012

- Abstract: Word representation plays an important role in natural language processing and has attracted considerable attention from researchers because of its simplicity and effectiveness. However, traditional methods for learning word representations generally rely on large amounts of unlabeled training data and neglect the semantic information of words, such as the semantic relationships between them. To make full use of existing domain knowledge bases, which contain rich semantic information about words, we propose KbEMF, a word representation learning method that incorporates semantic information. The method uses matrix factorization to add domain-knowledge constraint terms to the word representation learning model, so that words with strong semantic relationships receive relatively similar representations. Results on word analogy reasoning and word similarity measurement tasks using real-world data show that KbEMF outperforms existing models.
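The general idea in the abstract, factorizing a word-context matrix while a knowledge-base term pulls related words together, can be illustrated with a small sketch. This is not the KbEMF objective from the paper, which the abstract does not state. It assumes a squared-error factorization loss, a squared-distance penalty over related word pairs, and a small L2 weight decay. The function name `factorize_with_knowledge`, the parameters `gamma` and `lam`, and the toy matrix `M` are all hypothetical.

```python
import numpy as np

def factorize_with_knowledge(M, related_pairs, dim=2, gamma=1.0,
                             lam=0.05, lr=0.01, steps=500, seed=0):
    """Gradient descent on an assumed, illustrative objective:
        ||M - W C^T||_F^2
        + gamma * sum over (i, j) in related_pairs of ||w_i - w_j||^2
        + lam * (||W||_F^2 + ||C||_F^2)
    M is a (words x contexts) matrix; W holds the word vectors.
    """
    rng = np.random.default_rng(seed)
    n_words, n_contexts = M.shape
    W = 0.1 * rng.standard_normal((n_words, dim))
    C = 0.1 * rng.standard_normal((n_contexts, dim))
    for _ in range(steps):
        R = W @ C.T - M                      # reconstruction residual
        grad_W = 2 * R @ C + 2 * lam * W
        grad_C = 2 * R.T @ W + 2 * lam * C
        # Knowledge term: pull each related pair of word vectors together.
        for i, j in related_pairs:
            diff = W[i] - W[j]
            grad_W[i] += 2 * gamma * diff
            grad_W[j] -= 2 * gamma * diff
        W -= lr * grad_W
        C -= lr * grad_C
    return W, C

# Toy data (made up): words 0 and 1 occur in different contexts,
# but a hypothetical knowledge base lists them as related.
M = np.array([[3.0, 0.0, 1.0, 0.0],
              [0.0, 3.0, 0.0, 1.0],
              [2.0, 0.0, 2.0, 0.0],
              [0.0, 2.0, 0.0, 2.0]])

W_plain, _ = factorize_with_knowledge(M, related_pairs=[], gamma=0.0)
W_kb, _ = factorize_with_knowledge(M, related_pairs=[(0, 1)], gamma=1.0)

dist_plain = np.linalg.norm(W_plain[0] - W_plain[1])
dist_kb = np.linalg.norm(W_kb[0] - W_kb[1])
print(dist_plain, dist_kb)
```

In this sketch the pair (0, 1) ends up closer under the knowledge term than under plain factorization. The trade-off is that the reconstruction of `M` gets somewhat worse, and the assumed weight `gamma` controls that balance.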



Last update: 2017-10-25